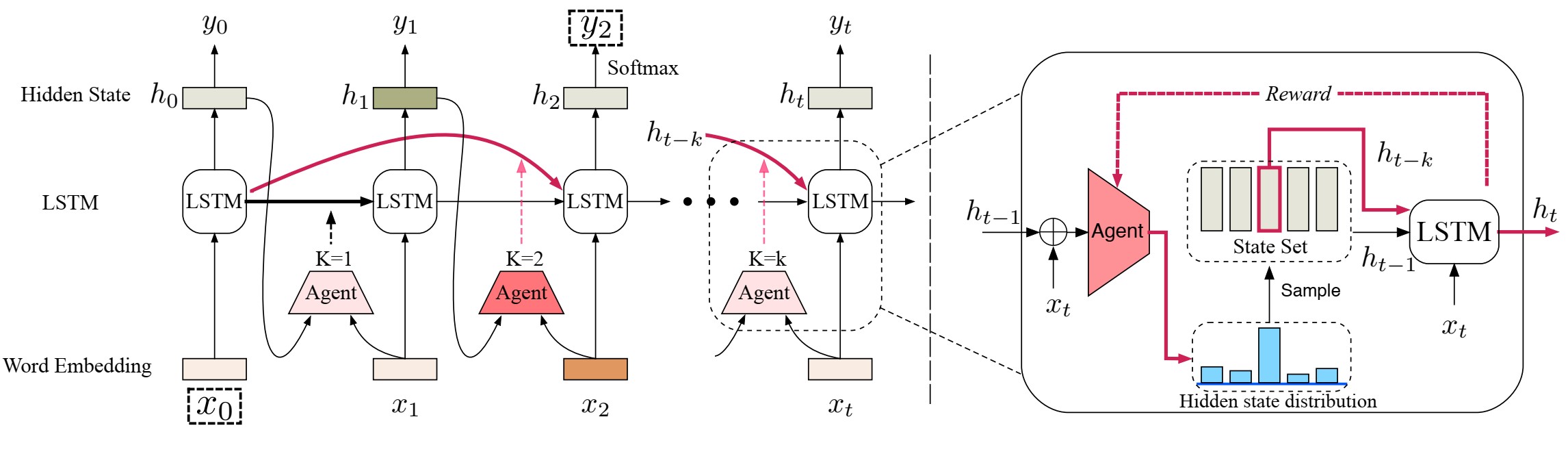

We introduce a dynamic skip connection mechanism to address the weak performance of LSTMs on long-distance dependencies. The mechanism connects two dependent words directly, rather than routing information only through the immediately preceding state.
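
To make the idea of a skip connection concrete, here is a minimal sketch that uses a toy scalar recurrent unit rather than a full LSTM. The function name `skip_rnn`, the weights `w_x` and `w_h`, and the window size `K` are hypothetical choices for illustration and are not taken from the source. At each step the unit reads one of the last `K` hidden states, chosen by a skip distance, instead of always reading the previous one.

```python
import math
from collections import deque


def skip_rnn(inputs, skips, K=3, w_x=0.5, w_h=0.9):
    """Toy scalar recurrent unit with a per-step skip distance.

    skips[t] == 1 is the ordinary recurrence (read the previous state);
    skips[t] == k reads the state from k steps back. Distances are
    clamped to the number of states currently stored (at most K).
    Returns the final hidden state and the distances actually used.
    """
    history = deque([0.0], maxlen=K)  # history[-1] is the most recent state
    used = []
    h = history[-1]
    for x, k in zip(inputs, skips):
        k = max(1, min(k, len(history)))  # clamp to the available window
        h_src = history[-k]               # the "directly connected" state
        h = math.tanh(w_x * x + w_h * h_src)
        history.append(h)
        used.append(k)
    return h, used


# Ordinary recurrence versus reaching further back at the last step.
h_plain, _ = skip_rnn([1.0, 0.0, 0.0], [1, 1, 1])
h_skip, used = skip_rnn([1.0, 0.0, 0.0], [1, 1, 2])
```

In this sketch the skip distances are supplied by hand; in the mechanism described here they would be chosen dynamically at each step.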

Because the training data contain no dependency labels, we propose a new method based on reinforcement learning that learns the dependencies automatically from the data.
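
The following sketch illustrates how a skip distance could be learned from a reward signal alone, without dependency labels. It uses a plain REINFORCE update on a softmax policy over skip distances, which is an assumption here and may differ from the exact training procedure of the method. The reward function is a hypothetical stand-in; in a real model the reward would come from the downstream task.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_skip_policy(reward_fn, num_actions=3, steps=300, lr=0.5, seed=0):
    """Learn a distribution over skip distances with REINFORCE.

    Action i stands for "read the hidden state i + 1 steps back".
    reward_fn(action) returns a scalar reward (hypothetical here).
    """
    rng = random.Random(seed)
    logits = [0.0] * num_actions
    for _ in range(steps):
        probs = softmax(logits)
        action = rng.choices(range(num_actions), weights=probs)[0]
        reward = reward_fn(action)
        # Gradient of log pi(action) w.r.t. the logits is onehot(action) - probs.
        for i in range(num_actions):
            grad = (1.0 if i == action else 0.0) - probs[i]
            logits[i] += lr * reward * grad
    return softmax(logits)


# Hypothetical reward: only reaching back 3 words (action index 2) helps.
probs = train_skip_policy(lambda a: 1.0 if a == 2 else 0.0)
```

No label ever says which earlier word is the right one; the policy shifts probability toward a skip distance only because choosing it was rewarded.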